New Automated QA Feature Update Helps You Improve Auto Score Card Accuracy

A few weeks ago, we compared manual agent evaluation vs. software-supported agent scoring vs. automated call scoring for quality assurance. The conclusion was that while manual scoring usually leads to more accurate results than auto scoring, it is very labor- and time-intensive, resulting in only 1-3% of the calls being manually evaluated. Therefore, scoring 100% of the calls using automatic call scoring — in addition to manually scoring calls — is required to get a complete picture.

While we are very proud of the accuracy of MiaRec's Auto Score Card, we also know that the results achieved largely depend on our customers' ability to correctly configure and fine-tune the evaluation criteria to improve its accuracy over time.

In today's post, we are thrilled to announce new capabilities that not only allow you to very easily identify and eliminate discrepancies between manual and automatic agent evaluation, but also to mix the two in one form, giving you much greater flexibility.

Unifying Software-Supported Agent Evaluation & AI-Driven Auto Score Cards

Creating and managing your agent evaluation forms is now easier than ever. You create the form once in the Agent Evaluation Form Designer that's in the MiaRec Administration panel, regardless of whether you want to use it for a software-supported evaluation (filled out by a supervisor while listening to a call) or AI-powered automatic quality management (automatically done by MiaRec using Generative AI technology).


Screenshot of editing custom criteria within the MiaRec Evaluation Form Designer feature.

With a click of a button, you can switch the form to be used as an Auto Score Card or used by supervisors for their evaluation. In addition, you can assign specific questions to be evaluated automatically (through Auto Score Card), while others will remain for supervisors to answer. For example, use Auto Score Card to check for easily identifiable, standard phrases, e.g., whether or not an agent states their name, thanks the customer for calling, and verifies the caller's name. For trickier or more intricate quality assurance criteria (e.g., Did the agent show empathy?), you can use MiaRec's software-supported agent evaluation.
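As a rough illustration of mixing the two modes in one form, the sketch below models each question with a flag that says who answers it. The `Mode`, `Question`, and `split_by_mode` names are hypothetical and chosen only for this example; they are not MiaRec's actual data model or API.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    AUTO = "auto"      # answered by the automatic score card
    MANUAL = "manual"  # answered by a supervisor


@dataclass
class Question:
    text: str
    mode: Mode


# Hypothetical form mixing both modes (illustrative only).
form = [
    Question("Did the agent state their name?", Mode.AUTO),
    Question("Did the agent thank the customer for calling?", Mode.AUTO),
    Question("Did the agent verify the caller's name?", Mode.AUTO),
    Question("Did the agent show empathy?", Mode.MANUAL),
]


def split_by_mode(questions):
    """Group question texts by who is expected to answer them."""
    groups = {mode: [] for mode in Mode}
    for q in questions:
        groups[q.mode].append(q.text)
    return groups


groups = split_by_mode(form)
print(len(groups[Mode.AUTO]), "automatic,", len(groups[Mode.MANUAL]), "manual")
```

In this sketch, the standard-phrase checks go to automatic scoring and the empathy question stays with the supervisor, mirroring the split described above.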

Ability To Override Auto Score Card Evaluations Manually

MiaRec's AI-driven Auto Score Card lets you evaluate all of your calls with unrivaled precision, using Natural Language Processing (NLP), machine learning, and Generative Artificial Intelligence (AI). Not only does it deliver remarkable accuracy, but it is also highly flexible to configure, so you do not need an engineering background to customize your scorecard forms.

Autoscoring saves a contact center hundreds of hours every month and does not require administrators or supervisors to continuously fine-tune the keywords and expression syntax that the autoscoring is based on. Because it is built on Generative AI technology, MiaRec AI is able to understand context without looking for specific keyword combinations.

With this new feature update, you can now manually override an Auto Score Card evaluation whenever you find a discrepancy between the automatic result and your own assessment of the call, resulting in more accurate evaluations. Any overridden score will be marked with an asterisk.

Fine-tune AI-Driven Auto Score Card Over Time More Efficiently

In addition to the ability to override single scores, MiaRec now makes it easier to identify and eliminate the causes of poor autoscoring. To help with this, MiaRec now provides a report that shows the manual scores and Auto Scores side by side. A large discrepancy between the two usually means one of two things: you are either not scoring enough calls manually, or you need to improve your Auto Scoring criteria.

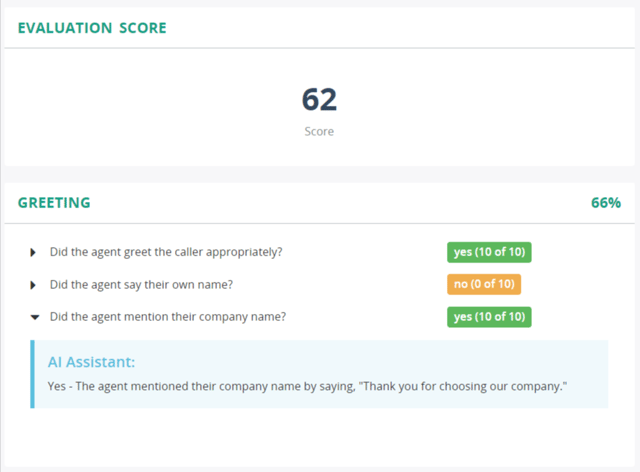

Screenshot of Automatic Score Card with score sectional breakdown including customizable criteria
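To make the side-by-side comparison concrete, here is a minimal sketch of how the gap between manual and automatic scores could be summarized. The sample scores, the `discrepancy_report` helper, and the 15-point threshold are all assumptions made for illustration; this is not how MiaRec's report is implemented.

```python
from statistics import mean

# Hypothetical scores (0-100) for calls that were scored both ways.
calls = [
    {"call_id": "c1", "manual": 90, "auto": 85},
    {"call_id": "c2", "manual": 70, "auto": 95},
    {"call_id": "c3", "manual": 80, "auto": 78},
]

THRESHOLD = 15  # assumed cut-off for a "large" discrepancy


def discrepancy_report(rows, threshold=THRESHOLD):
    """Return the mean absolute gap and the calls whose gap exceeds the threshold."""
    gaps = {r["call_id"]: abs(r["manual"] - r["auto"]) for r in rows}
    flagged = [cid for cid, gap in gaps.items() if gap > threshold]
    return mean(gaps.values()), flagged


avg_gap, flagged = discrepancy_report(calls)
print(f"average gap: {avg_gap:.1f}, calls to review: {flagged}")
```

Calls flagged this way would be natural candidates for a closer look at the scoring criteria behind them.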

In another report, you can see all the areas where you have overridden autoscoring results. This helps you quickly identify any fine-tuning and improvements you should make to the keywords or phrases you have used, or to the keyword expression syntax you have implemented. This review is especially important in the first two months after launching a new evaluation form, but it should be done regularly afterward as well.

With these new capabilities, improving your Auto Score Card will only take minutes once in a while, but will save you dozens of hours of manually evaluating your agents' performance.
